Your Next Long-Context Recipe: Open-Ended Problems

Coding data lifts reasoning. Agentic coding is dominant. We introduce Frontier-CS: 172 open-ended problems with continuous scoring, all one harbor run away. Kimi K2.6 and Claude Opus 4-7 go head-to-head, sustaining up to 456 turns, 405 tool calls, and 531K output tokens per problem.

Together, the two agents produce some of the longest agentic trajectories in any public benchmark.

A New Source of Long-Context Data

Coding is the de facto standard for measuring model performance because it naturally involves long-context reasoning, iterative refinement, tool use, and persistent state. But most public coding benchmarks still fall short of stress-testing the long-horizon capabilities agents need in real deployments. Frontier-CS aims to alleviate this problem by adding another coding source: open-ended problems. Each ships a domain-specific grader that returns a continuous score, not pass/fail.

What makes them a good long-context source? Three properties:

- No ceiling. An agent that scores 0.6 today can try for 0.7 tomorrow. There is always room to improve unless an agent finds a problem’s true optimum, which even humans do not yet know.

- Long-horizon reasoning. Agents sustain sessions of up to 456 turns and 405 tool calls, generating up to 531K output tokens on a single problem. This is not one-shot generation. It is sustained, iterative problem-solving over hundreds of steps.

- Dense feedback signal. The continuous score creates a natural reward landscape. Each intermediate attempt receives a useful signal, giving the agent room to improve through iteration.

What Makes Open-Ended Problems Different

Here’s where open-ended problems split from software engineering (SWE) tasks. SWE tasks are well-defined: a spec, a reference behavior, a verifiable right answer. For open-ended problems, almost everything is unknown except how good the final result is. That is true for both agents and humans. As a result, attempts in many different directions can all be meaningful. Take the Ramsey number $R(5,5)$: its true value lies somewhere in $[43, 46]$, and nobody knows the exact number. Any construction that pushes the known lower bound up by even one is a genuine contribution.

How hard are these problems for humans? In our Calico experiment, we placed one open-ended problem in UC Berkeley’s official programming contest with 2,000+ participants. Out of 285 submissions, only one surpassed the strongest AI agent.

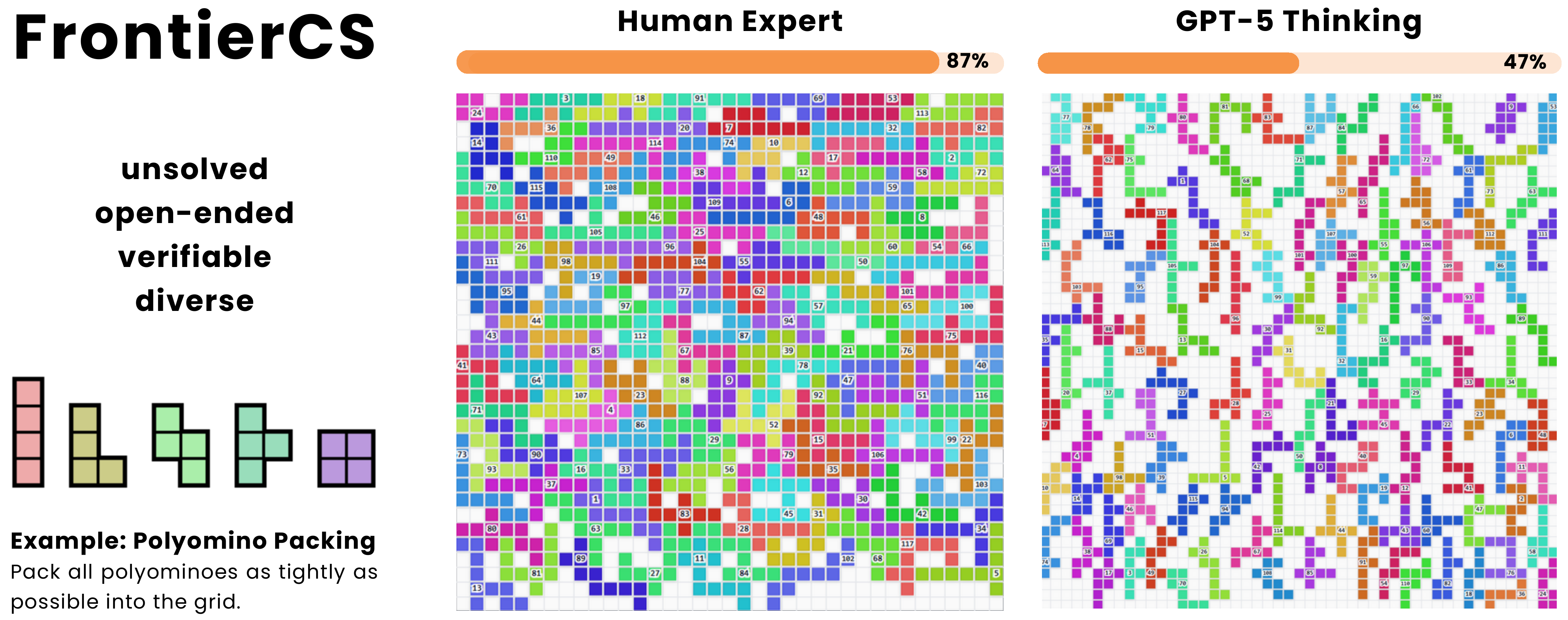

Example: Polyomino Packing

One concrete example from the Frontier-CS benchmark: given a set of polyomino pieces, pack as many as possible into a fixed grid. The grader scores each submission by packing density: a continuous signal, not pass/fail.

The search space is combinatorially vast: piece ordering, rotation, placement strategy, and local compaction all interact. No polynomial-time algorithm is known for finding the optimal packing. An agent must iteratively generate code, compile, test, analyze results, and refine its approach across many rounds. This single problem can produce trajectories spanning hundreds of agent turns.
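To make "scored by packing density" concrete, here is a minimal sketch of what such a scorer could look like. It is not the benchmark's actual grader: the function name `packing_density`, the cell-list input format, and the choice to score invalid packings as 0 are all assumptions for illustration.

```python
from typing import Iterable

Cell = tuple[int, int]  # (row, col) on the grid


def packing_density(width: int, height: int,
                    placements: Iterable[Iterable[Cell]]) -> float:
    """Fraction of grid cells covered by the placed pieces.

    Hypothetical scoring rule: a packing with an out-of-bounds or
    overlapping cell scores 0.0 (an assumption, not the real grader).
    """
    occupied: set[Cell] = set()
    for piece in placements:
        for r, c in piece:
            if not (0 <= r < height and 0 <= c < width):
                return 0.0  # piece sticks out of the grid
            if (r, c) in occupied:
                return 0.0  # two pieces claim the same cell
            occupied.add((r, c))
    return len(occupied) / (width * height)


# One L-tromino and one domino on a 4x4 grid: 5 of 16 cells covered.
example = [[(0, 0), (1, 0), (1, 1)], [(0, 2), (0, 3)]]
print(packing_density(4, 4, example))
```

Because the score is a ratio rather than a verdict, every valid intermediate packing gets a number the agent can try to beat on the next round.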

The animation below shows an adaptive evolution agent progressively discovering denser packing strategies through better ordering, symmetry handling, and local compaction across successive iterations:

Empirical Check: Kimi K2.6 vs Claude Opus 4-7

We ran two frontier coding agents on 105 non-interactive algorithmic tasks from Frontier-CS. The results tell two stories: how hard the problems are, and how differently two top agents attack them.

Essentially tied on the scoreboard. Wildly different under the hood.

Two strategies, one scoreboard. Kimi K2.6 thinks on every single step, with up to 404 thinking calls per problem, while using 25% fewer output tokens: 149K vs 200K. Only 8 of its tasks hit the 96K output cap, compared to 17 for Opus. Denser thinking, tighter budget, same result.

Opus compensates with sheer volume: up to 456 turns and up to 531K output tokens on a single problem. Both agents sustain sessions of hundreds of tool calls. This is genuinely long-horizon agentic behavior. Half the problems defeat both agents entirely. These are genuine frontiers.

What does this mean for long-context data? Every one of these 105 runs produces a rich, multi-turn trajectory, the kind of data that is hard to synthesize and expensive to collect from humans. The continuous scoring means each trajectory comes with a natural quality signal. Two different agents attacking the same problem produce structurally different trajectories at the same quality level, giving natural diversity for free.

Different Failure Modes

The 17-vs-8 row in the table above is not noise. Every one of Opus’s 17 crashed trials exits with the same message: “Claude's response exceeded the 96000 output token maximum.” Neither of Kimi’s two crashes does. Two case studies make the contrast concrete:

frontier-cs-220 (“Playing Around the Table”) shows the hard failure mode: Opus scored 0 without a single tool call, burning 384K completion tokens in extended thinking before hitting an API error. Kimi instead spread the work over 207 turns, 41 tool calls, and context compaction, reaching 1.000. frontier-cs-0 shows the softer version: Opus self-validated confidently, but all 70 real grader cases timed out; Kimi used a more conservative solver and scored 0.74.

Across the 17 problems where Opus hit the cap, Kimi got positive scores on 8, including 1.000 on cs-220 and 0.994 on cs-87. Drop those 17 zeros from Opus’s average and its mean rises from 35.1 to 41.8, well ahead of Kimi’s 34.0. The headline parity is not Kimi catching up. It is Opus losing points on the hardest problems by hitting a ceiling Kimi’s harness does not have.
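The adjusted mean can be checked with a few lines of arithmetic. This sketch assumes the 35.1 average is taken over all 105 tasks on a 0-100 scale and that the 17 capped runs each contribute exactly 0; the function name is ours.

```python
def mean_without_dropped_zeros(mean_all: float, n_all: int, n_zeros: int) -> float:
    """Recompute a mean after removing n_zeros entries that are all zero.

    Removing zeros leaves the total unchanged and only shrinks the count.
    """
    total = mean_all * n_all
    return total / (n_all - n_zeros)


# Assumed inputs: 105 tasks, mean 35.1, 17 zero-scored capped runs.
adjusted = mean_without_dropped_zeros(35.1, 105, 17)
print(f"{adjusted:.2f}")
```

From the rounded inputs this comes out at roughly 41.9, in line with the reported 41.8; the small difference is consistent with rounding in the 35.1 figure.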

172 Problems, One Command

All 172 open-ended problems ship as a Harbor adapter, joining the ~70 benchmarks already on Harbor: SWE-Bench, Terminal-Bench, USACO, GPQA, BFCL, and more. Harbor gives you a standard task format, a standard agent interface, a trial orchestrator, and a provider layer: local Docker, Daytona, Modal, E2B, or GKE.

```bash
# Run with any standard agent CLI
uv run harbor run -d frontier-cs-algorithm \
  -a claude-code -m "anthropic/claude-opus-4-6"
```

Plug in Claude Code, OpenHands, Codex CLI, Aider, Terminus, or any agent behind Harbor’s BaseAgent. Each task spins up two isolated services: main for the agent’s workspace, and judge for the upstream go-judge with the real grader. The agent never sees test data or the grader. It only sees the problem statement and its own code.

Design

The isolation is structural. The agent’s mounts are read-only and hold only the problem statement, the workspace guide, and a config file. The judge container holds the test data and the chk.cc grader, but only ever sees one problem directory at a time. The only channel between them is an HTTP submission endpoint that takes a single C++ file and returns a number. There is no shared filesystem to scrape, no environment variable to read the grader out of, no way to swap the verifier mid-trial.

Each trial follows the same five-step path: (1) bring up main and judge; (2) hand the agent the problem statement and let it write, compile, and stress-test inside main; (3) run the verifier, which submits solution.cpp to judge:8081/submit; (4) poll for the result; and (5) write the normalized partial score to /logs/verifier/reward.txt. The agent never speaks to the judge. The verifier is the only thing that does.
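The verifier half of that path (submit, poll, write reward) can be sketched as follows. This is an illustration, not the adapter's code: `run_verifier` and its parameters are hypothetical, the judge's request and response format is not described here, so the HTTP calls are passed in as plain callables, and clamping the score to [0, 1] is an assumption about what "normalized" means.

```python
import time
from pathlib import Path
from typing import Callable, Optional


def run_verifier(
    source_path: Path,
    reward_path: Path,
    submit: Callable[[str], str],            # sends code, returns a submission id
    poll: Callable[[str], Optional[float]],  # returns a score, or None if pending
    max_polls: int = 60,
    poll_interval_s: float = 1.0,
) -> float:
    """Submit one C++ file, poll until a score arrives, write it as the reward."""
    score: Optional[float] = None
    if source_path.exists():
        # In a real setup, `submit` would POST to judge:8081/submit.
        submission_id = submit(source_path.read_text())
        for _ in range(max_polls):
            score = poll(submission_id)
            if score is not None:
                break
            time.sleep(poll_interval_s)
    # No file or no result counts as 0; clamping to [0, 1] is an assumption.
    reward = 0.0 if score is None else max(0.0, min(1.0, score))
    reward_path.parent.mkdir(parents=True, exist_ok=True)
    reward_path.write_text(f"{reward}\n")
    return reward
```

Keeping the transport behind two callables mirrors the design point in the text: the agent's side never needs to know how the judge is reached, and the same logic can be exercised with fakes in a unit test.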

For reproducibility, three judge image modes are supported: per-trial build by default, a locally cached frontier-cs-judge:latest, or the published frozen image yanagiorigami/frontier-cs-harbor-judge:latest. The published image is what we pin for the parity numbers below.

Parity With the Native Eval

Does Harbor preserve native scores? We took 10 problems, the subset of the first 15 with a published reference solution, and ran claude-code@2.1.112 with claude-opus-4-6, three runs each, on both sides:

| Side | Partial Score (0-100) |
|---|---|
| Native Frontier-CS eval | 68.92% ± 11.54% |
| Harbor adapter | 53.37% ± 9.88% |

Three things are aligned on purpose: the agent prompt, the agent CLI invocation, and the chk.cc grader inside the go-judge. The adapter’s agent_constants.py mirrors the upstream version; the only intentional difference is that CLAUDE.md is renamed to AGENT.md so the same task runs under non-Claude agents too. The only thing that changes between the two evals is transport: in-process on the native side, HTTP on the Harbor side.

The gap between the two rows mostly comes from runs where the agent ran out of tokens before submitting; per Frontier-CS convention these count as 0. On the full set, the oracle agent, which copies the reference solution, scores 70.23% across every problem with a shipped reference, with 0 harness errors. Per-problem breakdowns and raw run arrays live in the adapter’s parity_experiment.json.
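To see how a single no-submission run moves these numbers, here is a small sketch of one plausible aggregation. It assumes the ± value is the sample standard deviation of per-run averages, which the post does not state; `summarize` is a hypothetical helper and the scores below are made-up placeholders, not values from parity_experiment.json.

```python
from statistics import mean, stdev
from typing import Optional, Sequence


def summarize(runs: Sequence[Sequence[Optional[float]]]) -> tuple[float, float]:
    """Mean and sample std of per-run averages.

    A score of None means the agent never submitted; following the
    convention described above, it is counted as 0.
    """
    per_run = [mean(0.0 if s is None else s for s in run) for run in runs]
    return mean(per_run), stdev(per_run)


# Placeholder data: three runs over two problems, one missing submission.
illustrative_runs = [[80.0, None], [80.0, 60.0], [70.0, 50.0]]
avg, spread = summarize(illustrative_runs)
print(f"{avg:.2f} ± {spread:.2f}")
```

In this toy case the one missing submission drags its run's average from what could have been a high score down to 40, which is enough to shift the overall mean noticeably when there are only three runs.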

Try It

The adapter is already available in the Harbor monorepo and the dataset is published on the Harbor registry:

```bash
# Sanity check with the oracle agent
uv run harbor run -d frontier-cs-algorithm

# A real run with Claude Code
uv run harbor run -d frontier-cs-algorithm \
  -a claude-code -m "anthropic/claude-opus-4-6"

# Or generate the tasks yourself from any Frontier-CS checkout
cd adapters/frontier-cs-algorithm
uv run frontier-cs-algorithm \
  --source https://github.com/FrontierCS/Frontier-CS.git \
  --output-dir ../../datasets/frontier-cs-algorithm \
  --use-published-judge
```

Pointers:

- Harbor task registry: yanagiorigami/frontier-cs, the published dataset

- Adapter source: Harbor monorepo

- Upstream Frontier-CS: problems, graders, paper

- Parity artifacts: Hugging Face

The algorithmic track is live today, and the rest of the Frontier-CS tracks are next on the list. Ping us on Discord if you hit anything weird, want to contribute a new agent comparison, or just want to chat about open-ended evals.