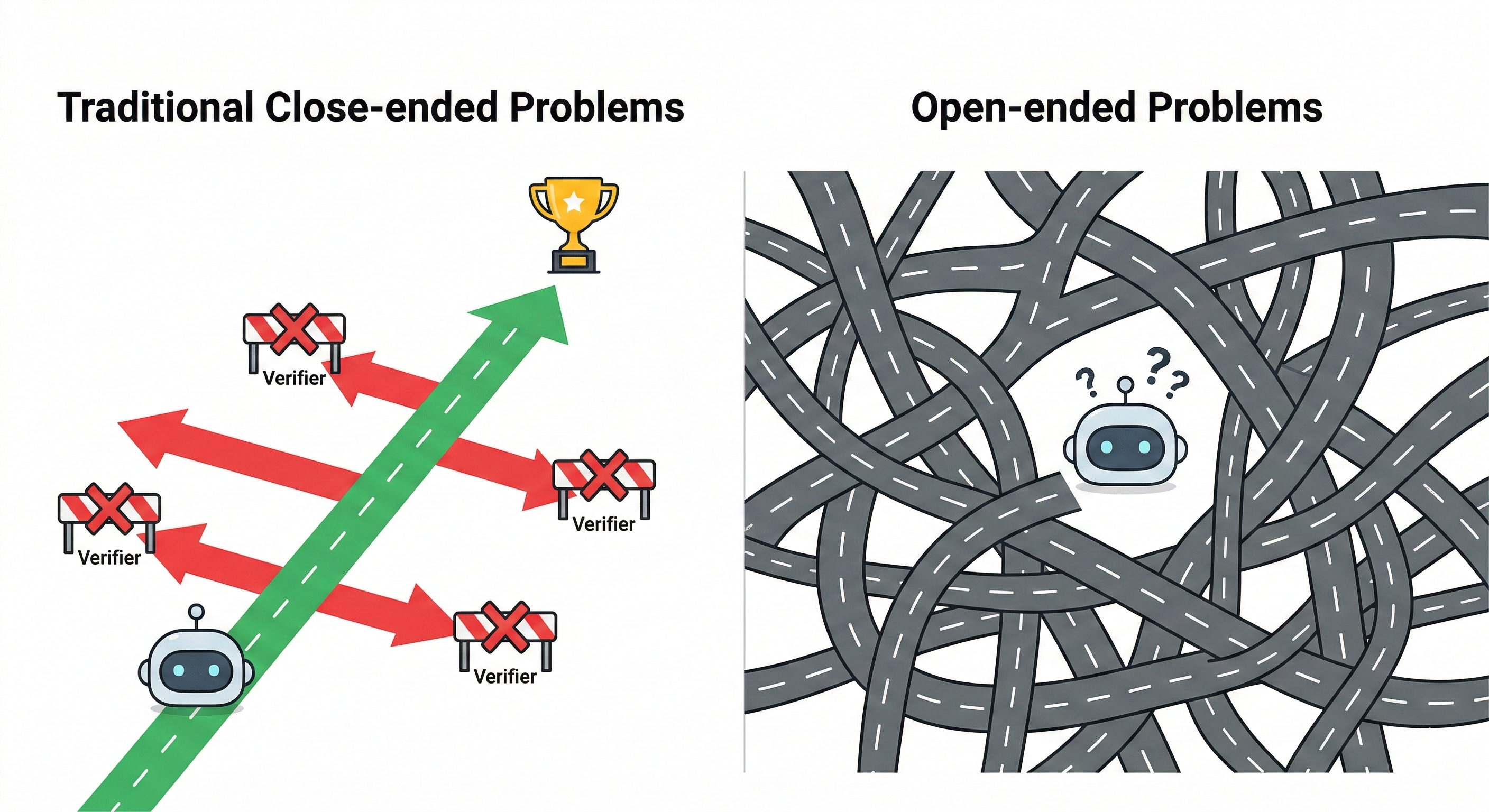

Your Next Long-Context Recipe: Open-Ended Problems

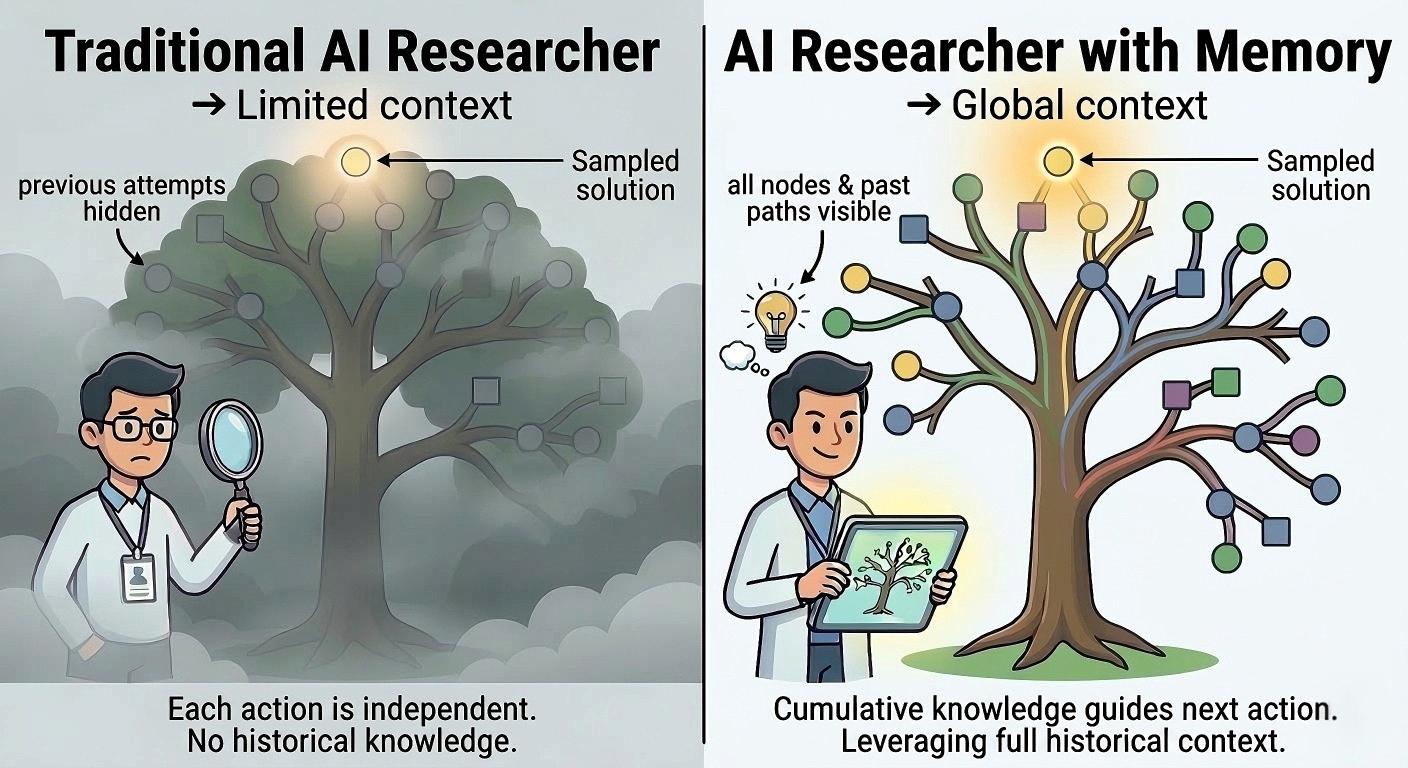

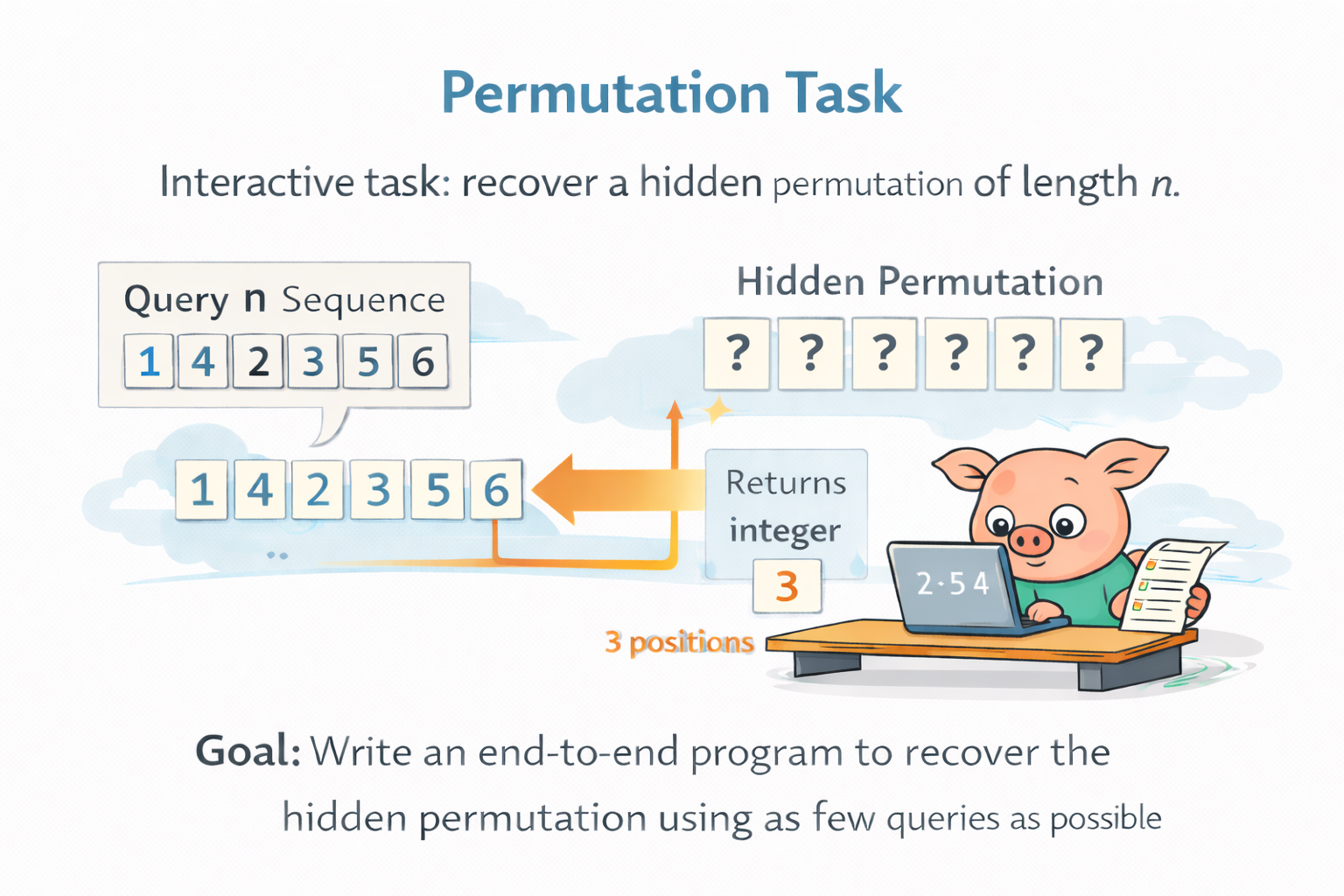

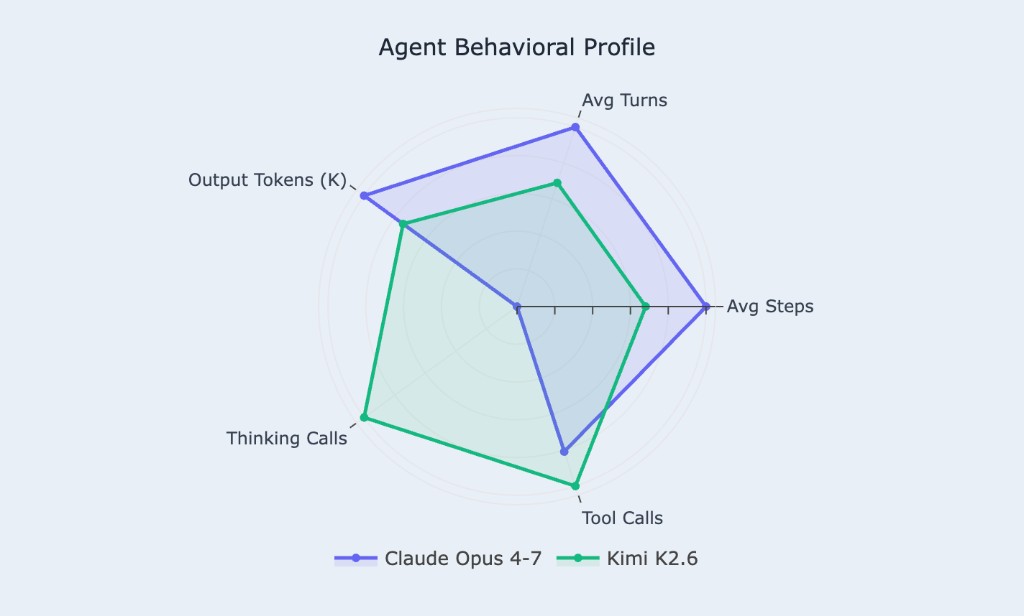

Coding data lifts reasoning, and agentic coding now dominates. We introduce FrontierCS: 172 open-ended problems with continuous scoring, each runnable with a single `harbor run`. Kimi K2.6 and Claude Opus 4-7 go head-to-head, sustaining up to 456 turns, 405 tool calls, and 531K output tokens on a single problem.
